Proof of Humanity

Goodhart's law for authenticity.

I’m trying something new: I quietly publish drafts online and let them sit for a while before emailing or posting about them. You’re early to this one, so comments are welcome!

At some point recently, being human became something you have to prove. A sentence style you’ve used for years, one you picked up from reading too much Didion or Tim Urban, suddenly looks suspicious. Someone posts a long note or essay, and before you consider what it says, you wonder whether they even wrote it.

Every creative act now comes with a second job: authenticating the creative act.

Writers are starting to post AI detector scores like purity seals: “100% Human Written.” Or they’re declaring their process pure: “not written with AI.” It’s anticipatory defense or a subtle flex or both. Others are admitting AI use upfront, with all kinds of qualifications about when and how and what to think of it. We’ve flipped from default trust to default mistrust, and from default confidence to fear.

I’ll admit I find it quietly sad, and yet I’ve indulged too. I’ve clarified that the em dashes I use are human-inserted. I’ve run others’ writing and my own through the tests. I’ve felt the satisfaction of validating something bad as AI, and I’ve been shocked when words I spilled out in a fit of inspirational rage or wrestled into pithy submission were marked suspicious. (I proceeded to seek out another detector for a second opinion, as if I were on trial.)

Detectors can’t definitively distinguish between someone who used AI and someone who just hasn’t shed the human patterns that AI has learned to imitate. At first, the edits suggested by AI review seemed helpful, but now they’re consistently too heavy-handed. Either way, the effect is the same: over-trained styles are eliminated, and writers skew toward today’s ‘human-safe’ zones.[1]

The ugly truth is that the moment you run your work through a detector you’ve given up something. You’re not asking if it’s good but if it passes. And you’re implicitly saying that if it doesn’t, you’ll change it until it does.

The detector becomes the editor. Goodhart’s Law says when a measure becomes a target, it ceases to be a good measure.

~ professing humanity or the opposite ~

II

The AI detector economy will thrive alongside AI’s acceleration. Selling shovels. Thematically, VCs are filing these tools under ‘cognitive security’: tools that offer protection from AI slop, from manipulation, and from bigger threats surely on the horizon. I see the opportunity and the value at scale, especially when humans need protection from bad actors.

For what I consider individual “consumer” writing, I’m more skeptical. What is the balance of good and bad?

Substack just added a slop report button and, on Twitter, it’s becoming the norm to tag AI detection tools for sloppish posts. It’s a public trial on demand.

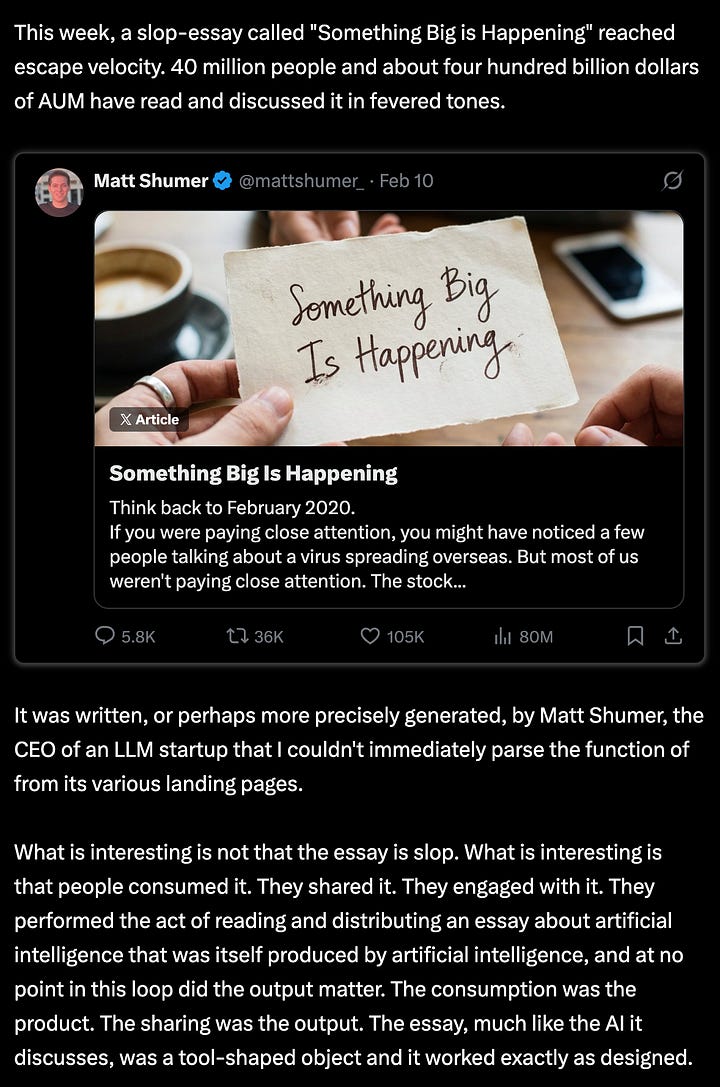

The very online tech crowd is ramping up this discourse. Twitter’s $1M Article contest unleashed a wave of longform essays, many of them suspicious. “Something Big Is Happening” hit 80 million views by analogizing AI to COVID.

Will Manidis cited it as a kind of Tool-Shaped Object — AI slop that served its purpose just by existing — it gave people a narrative to latch on to without thinking too hard. “The consumption was the product. The sharing was the output.” Yet the most prominent AI detector on Twitter scored the essay 100% human written.

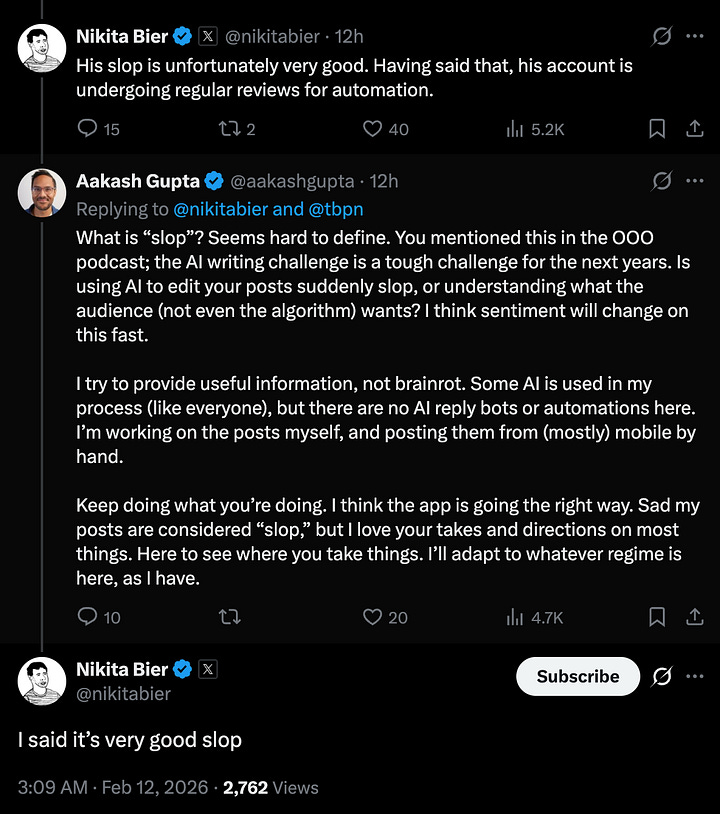

Another niche tech Twitter example: Trung Phan called out Aakash Gupta for posting dozens of longposts a day on trending topics. Nikita called it “very good slop” (and the account is under review for automation).

AI detection wars aren’t new on Substack. Every few months, a fast-growing writer triggers the neighborhood sleuths. Stepfanie Tyler’s essay on taste went mega-viral, and the big reaction was to label it AI-generated. She leaned in with a follow-up essay, “It’s My Party and I’ll Use AI If I Want To.” Honestly, smart.

III

People get mad. It fascinates me to think about why. The underlying frustration isn’t “this is fake.” It’s more like “I gave you my attention and you didn’t earn it.” I spent my time reading this and you spent zero time writing it.

The insult is the effort gap.

You could argue none of this should matter. That quality is quality regardless of method. But that’s not how human attention works.

Attention is a relationship, not a transaction.

And that prompts bigger questions beyond AI:

What do we owe each other when we ask for someone’s attention?

And how does the contract change when effort becomes invisible?

The plastic surgery analogy is helpful here. Nobody’s mad that someone looks good. They’re mad if they feel deceived. Maybe they compared themselves to someone who wasn’t “natural,” or gave a compliment that wasn’t earned (whatever that has historically meant). But our culture has mostly gotten over this. Plastic surgery and the full spectrum of aesthetic treatments are far less secretive and far less taboo now. The one big difference: beauty is taken in passively, but reading asks the audience to participate and to buy in. More investment is more betrayal.

This is why the discourse converges on detection instead of quality. Quality is more subjective and hence harder to weaponize. A verdict that something’s AI gives language to a deeper frustration.

People are also lashing out at the feeling that everything they read sounds the same, AI or not. For one, there’s just more ‘content’ flooding the whole system. The burden of parsing good from bad is greater than ever.

Another source of frustration, in my theory, is that so much media is now recursive: both subject and style are deeply intertwined with other media instead of with reality. It is the ouroboros of modern inspiration. This is one reason I think social media at large, Substack included, feels so monotonous right now.

From my recent post on taking a social media sabbatical:

> Media is increasingly about media and the recursion is exhausting. We talk about how ‘bad’ social media is for mental health because of addiction, envy, rage-bait, all the digital vices, but I think the self-referential nature of every discourse cycle is the silent disease. At least half the essays I read here are a response to another essay responding to another essay responding to … and that seems true of the takes on every platform. It’s a kind of lazy, narcissistic echo chamber increasingly disconnected from reality, lived experience, and novel investigation. It’s both energy-draining and uninspiring.

The dead internet theory feels validated more every day. For a while we’ve been saying the crumbling of the commons will force us to retreat to private communities and curated sources, which does seem likely. But at the same time, people innately want to discover new people and new ideas and new voices. How much proof of humanity we’ll need in order to stick around in public forums is still an open question. How we’ll protect these spaces and engender enough trust is another.

IV

In the movie Inception, corporations hire teams to break into people’s dreams and steal their secrets. So the targets are forced to hire dream security — guards trained to detect and repel intruders. But secret extraction is a distraction from the real threat: inception — planting an idea so deep in a person’s subconscious that the person thinks it was theirs. In the process, the detectors plant something else too: doubt.

Suspicion machines run on doubt and produce more of it.

We are all humanity detectors and we always have been. We’ve absorbed the culture of lip syncing, steroids, ghostwriters, and yes, plastic surgery. Fakery is cheap now. We’ve been burned enough that suspicion seems rational. And we’ve always loved catching fakes. The AI detector is the latest tool in a very old game.

We’re ‘othering’ AI to protect the value of human work. Because if we don’t, we’d have to depend on merit alone and on humans to recognize it. Merit is a scary place to live. And we don’t trust our eyes now.

So we invent proxies for ‘real.’ We optimize for them. They work briefly, then become legible, then become solved games. Then we invent new tests. The cycle repeats, and faster each time, because new technology makes both the catching up and the counterfeiting progressively easier.

Every new proof of authenticity becomes the new minimum. The new floor eats the old ceiling. The pattern is always the same: the moment authenticity becomes legible, it becomes gameable. It’s Goodhart’s law for authenticity.

V

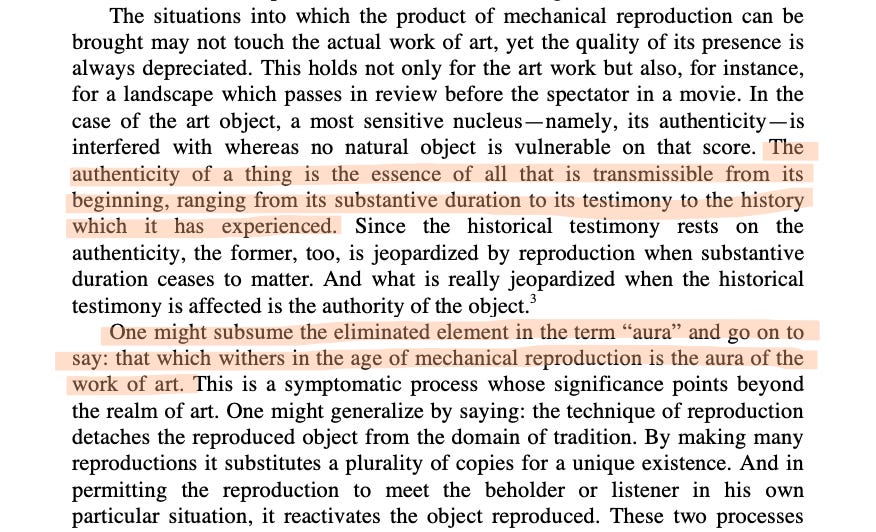

Walter Benjamin argued that mechanical reproduction erodes aura. We’ve moved past that. We’re now in the age of mechanically reproducing aura itself, so we’re looking for the next rung on the ladder of proof of humanity.

Aura used to be embedded in the object. Now it has to be embedded in the person. The personalization of proof is the new frontier.

Effort was once legible (proof of work), but that’s fast eroding. Next comes proof of process, or proof of craft. We’ve taken to performed transparency: sharing off-duty looks and behind-the-scenes content, founders building in public. If a tree falls in the forest and no one’s around to stream it, did it fall?

We livestream everything now. We kill all latency between in and out. We satisfy the suspicious while forging strong parasocial ties. No doubt this is contributing to livestreamers becoming so famous so fast: they’re performing realness by giving us extreme access to their daily lives, and doing extreme things to stand out from average realness.

But this still isn’t bulletproof. Once transparency is legible, it too starts looking and acting like a costume.

The infrastructure of proof erodes our capacity for belief. We forget what it felt like to consume without suspecting or create without bracing for suspicion.

If effort is now diminished and can be hidden, if proof will soon be gamed too, if transparency itself can be performed, what’s left?

Two things: distinctiveness and desire. Distinctiveness will be harder to achieve than ever, but if you achieve it, it’ll catapult you to the top. The only other thing that can’t be gamed seems to be wanting something badly enough that it shows. Desire is the closest thing to proof of authenticity we have left.

Desire is too high-dimensional to fake well. Not borrowed desire or socially acceptable desire or safe ambition. The kind that visibly proves itself yours: independent, embarrassing, and raw. (I hate to talk about Clavicular, but his unsavory yet blatant desire to maximize status based on looks is an example of revealing desire as authenticity, and he streams himself living out that desire.)[2]

To want something visibly is to risk failing visibly. That’s why most people hedge. They want things privately and with plausible deniability built in. And beyond public desire, you need a why, a living story that takes shape in private desire.

When Timothée Chalamet says he's on a quest for greatness, you can argue it's sincere or strategic. It doesn't matter. You believe he wants it. There's no reason to fake that desire, we think, only to hide it. And like it or not, this is why people label Donald Trump authentic too. His whole personality is telling you what he desires and telling you why he deserves to get it. You can hate what he wants and hope he doesn't get it, but you cannot deny that he wants it.

Duration is the final filter. People chasing rewards leave when rewards dry up. Sustained obsession is hard to fake. If you do fake it long enough to become indistinguishable from someone who has it, you’re not faking anymore. But to really sell people on it, you’ll have to be extreme. That’s the new world. Or you can take your chances with the idealistic approach — let the work speak for itself.

Just don’t show me a test that says you’re real. The only thing I know when you show me a 100% Human score is that you wanted me to know you were authentic. All you’ve proven is your desire to prove something. The most human trait of all.

In the end, authenticity is a lot like love. You can say the words. You can perform the gestures. But you can’t prove it. The proof is in our experience of the thing itself — or it’s nowhere. The moment we can’t recognize humanity without a machine to verify it, we’ve already lost ours.

If you enjoyed this essay, consider sharing it with a friend or community that might enjoy it too. Email me here or DM via Substack or Twitter / X. Other drafts I’m hoping to publish soon cover the future of work, health, status, and social structures.

[1] Of course, not all slop is AI. Some is just human slop. But the detectors collapse a spectrum of quality (bad, derivative, formulaic, brilliant) into a binary: human or not. The moment one essay needs a seal, every essay without one becomes suspect.

[2] Extreme actions in service of extreme desires inevitably read as strategic, but there’s typically no sugarcoating the desire to accrue money, power, fame, etc. That’s the real visible desire.
