White Collar Goes Blue

A working theory on the rights and reshaping of the professional laptop class.

AUTONOMY. CREATIVE OWNERSHIP. A SEAT AT THE TABLE. The right to say “no,” “not like that,” or “not right now.” Flexible schedules. Remote work. A title that keeps getting better. The expectation that your opinion shapes direction. The expectation that your resources scale with seniority.

These are some of what I call luxury workers’ rights. They sit on top of human rights, civil rights, and workers’ rights. They’re the terms of a job meant to make work feel more meaningful and make you feel more valued. We typically associate them with white collar work and view them as moral principles, but they’ve always been a form of compensation for the scarcity of cognitive labor.

White collar work as we’ve known it is cognitive labor with a personhood premium: autonomy over the work itself and value attached to the person doing it. Cheap capital and high margins made it easy for companies that needed human intelligence to pay these premiums. Software’s surplus has been subsidizing our ego-scaffolding. But now we’re facing the big shift.

The early narrative was that AI would kill blue collar jobs first, but it turns out the real world is full of friction, and in the meantime, AI got a lot better at thinking. So it’s white collar work that’s exposed. AI is making intelligence abundant, and when the scarcity of anything drops, the premiums drop with it.

If you strip white collar jobs of their luxury rights, the line between blue and white collar gets a lot thinner. The professional laptop class is staring down its biggest reshaping and identity crisis since industrialization.

II.

MOST OF THE DISCOURSE LATELY IS ABOUT WHETHER AI KILLS JOBS. I’m more curious about what happens to the jobs that stay and the new jobs that emerge. Here’s one shift I expect. White collar ‘goes blue’ in two senses: more white collar work starts to resemble the trades, and the white collar apparatus moves into traditionally blue collar territory.

Recently a friend was talking about the dynamics at their startup: They’re growing fast and hiring a lot, but it already feels like there are too many employees, and some are just getting in the way. They’re all using AI heavily, and yet the core team—the elite architects and product thinkers and tastemakers—feel like they could just do the work themselves. That’d be easier than dealing with so many hires and their individual strengths and weaknesses, philosophies, styles, and anxieties.

What I hear between the lines: critical work is now done by a few elite people plus AI. You still want a team to help execute, troubleshoot, manage, and socialize, but your patience for curating their experience equitably alongside the work is waning. Founders used to tolerate ten personalities on a spectrum of competence because they needed ten engineers to do the work. AI shrinks both the team and the tolerance, because the alternative is an intelligent machine you command.

AI doesn’t eliminate surplus. It expands it and then concentrates who gets it.

The problem is that you can’t renegotiate the social contract mid-employment. You can’t walk into a one-on-one and say, “Actually, we just need you to execute now and stop having opinions about what you’re executing.” So when the personhood overhead gets too cumbersome, it’s easier to reset: lay people off, restructure the org, and hire new people, either for net new jobs or for existing ones rescoped as trades from the start, with exceptions for an elite few.[1]

Headlines sensationalize AI job losses, but layoffs don’t always mean we need less human labor or that AI is the cause. Block just cut 4,000 people (nearly half the company), and Jack Dorsey attributed it to AI. But Block had doubled its headcount during the pandemic. This looks like another company right-sizing from COVID over-hiring, with AI as narrative cover. AI efficiency gains magnify the pre-existing bloat.[2]

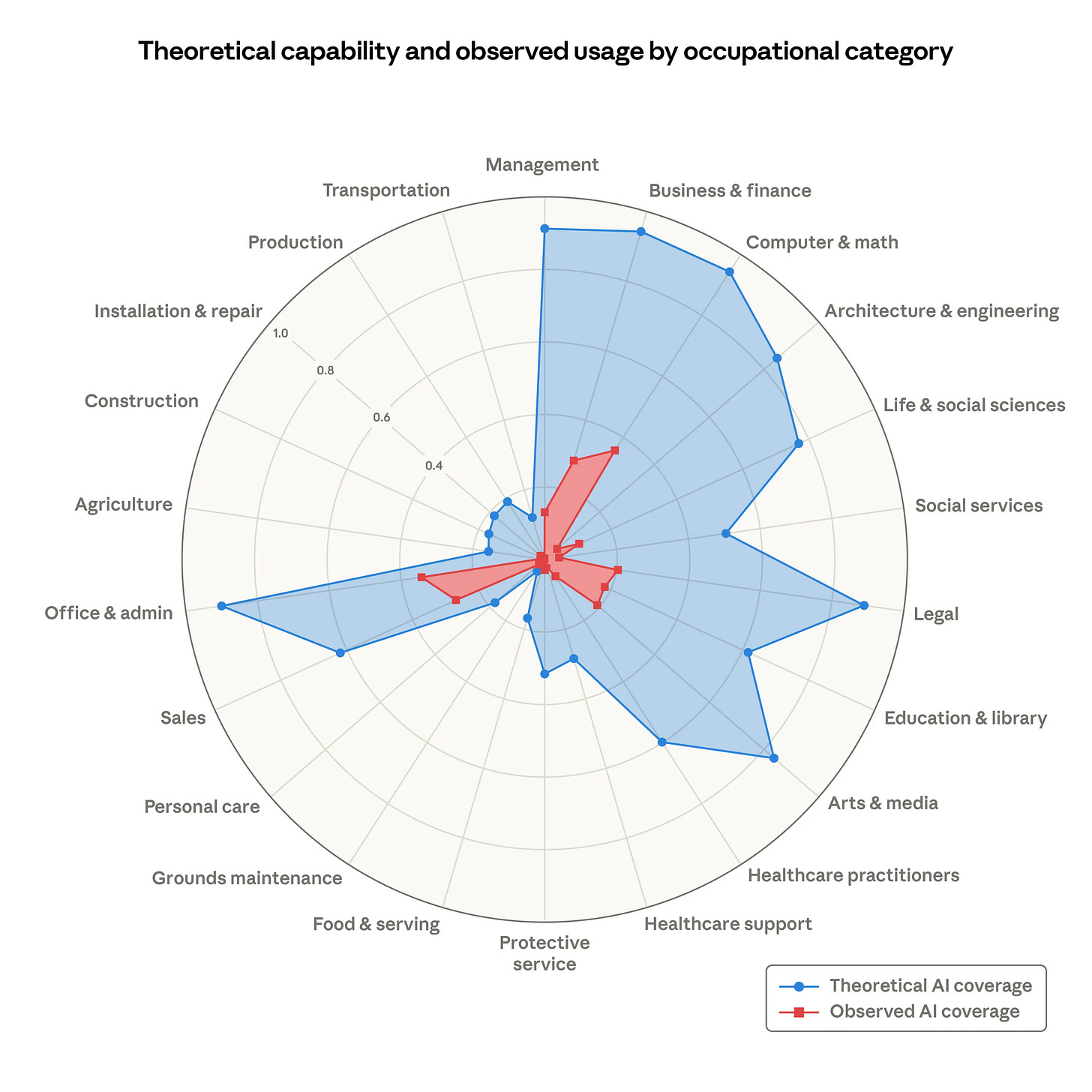

Anthropic just released its own labor market research this week, finding no real increase in unemployment for AI-exposed workers relative to four years ago, but some signs that entry-level hiring into those roles is slowing for young people in their early 20s. People may not be getting fired aggressively yet, but the most AI-exposed roles will stagnate, the on-ramp into those careers will narrow, and eventually some of their tasks will be automated. There’s a lot to figure out.[3]

III.

EVERY COMPANY BECOMES TWO COMPANIES. As a leader in the new world, you’re choosing who gets the full luxury experience and who doesn’t: fewer people with all the rights, or more people with fewer of them.

One model that seems increasingly likely is a two-tiered human organization inside every company: a small team shaping direction and a larger team executing within defined scopes. (In practice this already exists, but AI makes it harder to ignore.)[4]

There’s an elite team of thinkers, researchers, and builders who operate at ‘10x’ on ideas and execution and are treated and compensated that way. These become special teams: flat, close to leadership, and built around individual contributors rather than a cascade of managers. Everyone else belongs to the standard teams.

The elite teams are tastemakers. The standard teams are role players. Which is to say, the white collar economy is primed to split into its own white and blue.

The new class divide will not be what you do. It will be whether anyone knows your name. The named class shapes direction while the unnamed class executes it. The named class retains all the rights and gets more rewards, intangible and tangible. The unnamed class does good work and goes home. Skilled, dignified, well-compensated, but anonymous. Many ambitious people in the white collar economy want to be in the named class, but the power law says most can’t be.

We’re already seeing this at the top AI labs, where top researchers are getting poached for what we can only speculate are billion-dollar compensation packages; in the special AI teams being formed inside Meta; and in splashy acquihires of devs-as-tastemakers (e.g., Pete Steinberger and Riley Walz to OpenAI). (Still, the caliber of talent at the big AI companies is undoubtedly high all the way down. But it’s worth examining how organizations will be stratified going forward, even if it’s not the sexiest plot.)

Taking Anthropic’s jobs report at face value, we’ll need to rethink entry-level hiring. The white collar culture of hiring college kids as autonomous junior professionals with full packages and rights on day one makes even less sense now.

Bringing back apprentices seems like the obvious organizational and economic salve. Hire people to learn, support the senior team’s work, and earn their way into autonomous judgment work over time, which is how the trades have always worked. Elite teams will need proxies to extend themselves too. The “chief of staff” is the high-octane, high-trust, skilled apprentice for an org’s elite. We’re in a hyper-merit, hyper-agency limbo between old and new now.

IV.

THE RIGHTS WERE ALWAYS UNEVENLY DISTRIBUTED. The idea of white collar work losing its one-size-fits-all rights sounds like things are getting worse. But there’s always been a silent spectrum of who gets the best white collar experience and who’s already working a job resembling a trade. In practice, the engineer gets consulted on high-level decisions while customer support reps get flowcharts.

AI widens the economic gap between the promise and the delivery. When the distance between the tastemaker’s experience and the role player’s experience gets wide enough, pretending everyone gets the same deal stops making sense.

The power law already governs every talent market where output is visible and winning is relative. Star athletes get max contracts and creative accommodations while role players get a market-rate job and solid pay with no future guarantees.

I’d argue things will get more honest. The shift means more substance and less performance around the work from both employee and employer. Aspirationally, we rediscover the inherent dignity in doing good work and achieving something together instead of always needing to own and self-direct to feel valued.

White collar goes blue, but the convergence will run in both directions. White collar moves toward the trades, and blue collar starts pulling in the white collar apparatus; the physical world is the renewed frontier. Now the electrician’s kid will learn to code, and the coder’s kid will learn to weld. The new divide is closer to direction vs. execution, and it applies to both white and blue.[5]

V.

THE FLAT ORG WAS A LUXURY RIGHT TOO. But there are two kinds of flatness.

The version of “flat” that tech romanticized was consensus-driven flatness with many voices and distributed judgment. That made more sense when companies needed large teams of scarce cognitive labor. What AI encourages is closer to command flatness—smaller teams organized around a concentrated vision, where direction comes from a few people and execution radiates outward from them. The flat org survives, and even proliferates, but its meaning changes.

Film sets have always worked this way: one director and a world-class crew that submits to the director’s vision. In tech, this exists in early-stage startups but less so at maturity.

“Founder mode” was a timely articulation of what we’re now seeing structurally. It was framed as a debate about leadership effectiveness, but it was really about the folly of distributed judgment and the merit of concentrated vision. AI only amplifies this.

VI.

MAKE YOURSELF SCARCE AGAIN. If we are indeed headed this direction, and you still want the luxury rights and compensation and glory, the answer is in the mechanism itself. The premium was always tied to scarcity. AI compressed it. So the way back is to go where you’re still scarce—or become something that can't be compressed again.

What makes you scarce?

BRILLIANCE — You’re so good at something or so rare in your combination of skills (scientific, technical, creative, strategic) that you can’t be scoped into a trade. Your taste, voice, and judgment alone are a strong value proposition.

INFLUENCE — You have a name, an audience, a brand that engages high-value communities. Your taste is signal and cultural influence is power. People want to be associated with you, and that affiliation arbitrage is worth the overhead.

RELATIONSHIPS — You’re the person the founder trusts, the one the client wants to work with, the one who makes the team better, the one people just want to be around.

The people who retain the luxury conditions will be the ones who figure out on which of these vectors they’re an N of 1 and, perhaps more importantly, how to articulate that value to stakeholders. Maybe what I’m saying is that you can save yourself by becoming a legible artist. What artists do can’t be taught to humans or collapsed into a set of machine prompts. It’s scarce. They’re scarce. And the premium follows the scarcity, like it always has.

But scarcity only earns back the conditions. It doesn’t solve the identity. Where does that go? I theorized about that in Dreams of Stability. The short version: it goes home.

This is part of an ongoing series on the future of work and personhood. If you enjoyed it, consider sharing it with a friend or community that might enjoy it too. DMs are welcome: reply to this email, or reach me via Substack or Twitter.

Art: Grant Wood’s “Arnold Comes of Age” (1930), Degas’ “The Orchestra at the Opera” (1870), Rembrandt’s “The Anatomy Lesson of Dr. Nicolaes Tulp” (1632).

Related essays:

Dreams of Stability

Everyone says they hate the question: What do you do? It’s cliché and reductive. But nobody stops asking, and nobody stops answering. Because the answer is never just about what you do. It’s about what people think you’re worth, and what you want people to think you’re worth.

Companies already do this with contractors (scoped work, no personhood premium, no pretense of shared ownership). What’s changing is that this logic is moving from the periphery into full-time roles.

Where AI is genuinely changing needs, companies will still need to hire, just for new roles that haven’t been defined yet. The first hires at a startup will look similar to before, just with a higher bar. But maybe you don’t need to hire the second wave as fast, and when you do, its composition looks different.

Three-tiered if you count the machine layer. In Media and Machines, I wrote about the full stack: humans, machines, and the interfaces between them.

I wrote about five domains of human work in the age of AI: Trades, Research, Art, Community, and Stewardship. My focus here is a specific dynamic within that: how legacy white collar work reshapes toward trades and how people and orgs adapt.
